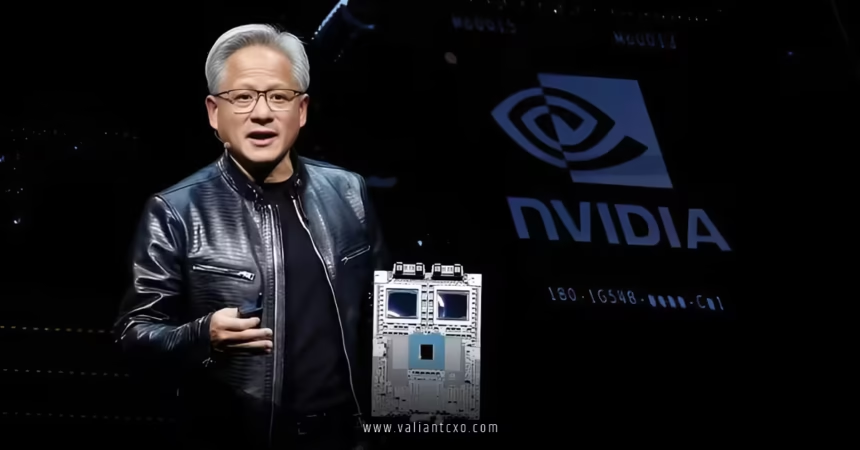

NVIDIA’s Blackwell GPUs have taken center stage in the AI world, delivering jaw-dropping leaps in speed, efficiency, and scale that leave the previous Hopper generation in the dust. If you’ve been tracking the explosive growth in artificial intelligence, you know this isn’t just hype: it’s the hardware fueling trillion-parameter models, real-time agents, and massive data center buildouts.

Picture this: training a giant language model used to feel like pushing a boulder uphill. With Blackwell, it’s more like strapping on a jetpack. These GPUs aren’t incremental upgrades; they’re architectural game-changers designed for the agentic AI era, where models don’t just respond but act autonomously. And the numbers? They’re staggering. Let’s break down why Blackwell’s performance and adoption are rewriting the rules of computing, and how they tie directly to the record data center revenue in NVIDIA’s Q4 FY2026 earnings report.

What Makes NVIDIA Blackwell GPU Performance So Revolutionary?

At its core, the Blackwell architecture packs insane density and smarts. A flagship like the B200 GPU boasts 208 billion transistors—more than double Hopper’s count—built on a custom TSMC 4NP process. It’s essentially two massive dies fused together via a blazing-fast 10 TB/s NV-HBI link, acting as one seamless beast.

Key specs that drive the wow factor:

- Memory upgrades: Up to 192 GB of HBM3e on the B200 (or even 288 GB on Blackwell Ultra variants like GB300), with bandwidth soaring to 8 TB/s. Compare that to Hopper’s H100 at 80 GB and ~3.35 TB/s. This means handling bigger models without constant swapping, slashing latency and boosting throughput.

- Tensor Core magic: Fifth-generation Tensor Cores introduce native FP4 and FP6 precision support. FP4 doubles throughput over FP8 while keeping accuracy high thanks to micro-tensor scaling in the second-gen Transformer Engine.

- Peak performance jumps: A single B200 hits around 20 petaFLOPS in FP4, roughly 4-5x Hopper’s peak, which tops out at FP8 because Hopper has no native FP4 support. For FP16/BF16, we’re talking 2.25 petaFLOPS dense (up to 4.5 petaFLOPS sparse), a clear 2x+ leap.
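
To make the micro-tensor scaling idea a bit more concrete, here is a minimal Python sketch of block-scaled 4-bit quantization. It assumes the E2M1 value grid commonly used for FP4 (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, 6) and a hypothetical block size of 4 chosen for readability. The function names are illustrative, and this is a toy model of why a shared per-block scale helps, not NVIDIA’s Transformer Engine implementation.

```python
# Toy sketch of block-scaled FP4 quantization (illustrative only).
# Assumptions: FP4 uses the E2M1 magnitude grid below; the block size is hypothetical.
FP4_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
FP4_MAX = FP4_MAGNITUDES[-1]


def quantize_block(block):
    """Return (scale, codes): one shared scale plus an FP4 grid value per element."""
    peak = max(abs(x) for x in block)
    scale = peak / FP4_MAX if peak > 0 else 1.0
    codes = []
    for x in block:
        target = abs(x) / scale
        # Round to the nearest representable magnitude, then restore the sign.
        nearest = min(FP4_MAGNITUDES, key=lambda m: abs(m - target))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale, codes):
    """Map grid values back to real numbers using the block's scale."""
    return [scale * c for c in codes]


def quantize_tensor(values, block_size=4):
    """Quantize a flat list block by block and return the reconstructed values."""
    out = []
    for start in range(0, len(values), block_size):
        scale, codes = quantize_block(values[start:start + block_size])
        out.extend(dequantize_block(scale, codes))
    return out


def max_abs_error(a, b):
    """Largest element-wise reconstruction error."""
    return max(abs(x - y) for x, y in zip(a, b))


# A block of tiny values followed by a block of large ones.
weights = [0.01, -0.02, 0.03, 0.015, 4.0, -3.0, 1.0, 6.0]
per_block = quantize_tensor(weights, block_size=4)              # one scale per block
per_tensor = quantize_tensor(weights, block_size=len(weights))  # one scale overall

print("per-block error: ", max_abs_error(weights, per_block))
print("per-tensor error:", max_abs_error(weights, per_tensor))
```

With a single scale for the whole list, the four small values all round to zero because the large values dominate the scale. With one scale per block, each block uses the full 4-bit grid, so the small values survive. That is the intuition behind fine-grained scaling, whatever the exact block size and scale format the hardware uses.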

But raw specs only tell half the story. Real-world NVIDIA Blackwell GPU performance shines in benchmarks.

NVIDIA Blackwell GPU Performance vs. Hopper: Head-to-Head Breakdown

Let’s get concrete. Independent tests and MLPerf results paint a clear picture:

- Training speed: Blackwell systems deliver up to 3x faster training on massive models like Llama 3.1 405B compared to Hopper. In some cases, B200-based setups finish tasks 57% faster than H100 equivalents, thanks to larger batch sizes enabled by that extra memory.

- Inference breakthroughs: This is where Blackwell really flexes. GB200 NVL72 racks achieve up to 30x faster real-time inference for trillion-parameter LLMs versus Hopper. Per-GPU throughput on Llama 3.1 405B is up to 3.4x higher than on H200 systems. For agentic AI, Blackwell Ultra pushes up to 50x better performance per megawatt and 35x lower cost per token.

- Efficiency edge: Energy usage drops dramatically, by up to 25x in some scenarios, making these GPUs greener for hyperscale deployments. Attention layers get 2x acceleration on Ultra variants, critical for the reasoning-heavy workloads exploding right now.
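
A rough back-of-the-envelope calculation shows why memory capacity and low precision matter for a model the size of Llama 3.1 405B. The sketch below counts weight storage only (no KV cache, activations, or optimizer state, all of which add substantially in practice), assumes decimal gigabytes, and uses the per-GPU capacities quoted earlier. The function names are illustrative.

```python
import math

# Bytes needed per parameter at each precision (weights only).
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}

# Per-GPU memory capacities in GB, taken from the figures quoted in this article.
GPU_MEMORY_GB = {"H100": 80, "B200": 192, "Blackwell Ultra": 288}


def weight_footprint_gb(num_params, precision):
    """Weight storage in decimal GB; ignores KV cache, activations, optimizer state."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus_for_weights(num_params, precision, gpu):
    """Smallest GPU count whose combined memory can hold the weights alone."""
    return math.ceil(weight_footprint_gb(num_params, precision) / GPU_MEMORY_GB[gpu])


PARAMS_405B = 405e9

for precision in BYTES_PER_PARAM:
    size = weight_footprint_gb(PARAMS_405B, precision)
    counts = {gpu: min_gpus_for_weights(PARAMS_405B, precision, gpu) for gpu in GPU_MEMORY_GB}
    print(f"{precision}: {size:.1f} GB of weights -> {counts}")
```

Under these assumptions, the FP16 weights alone come to 810 GB, which needs at least 11 H100s but only 5 B200s. At FP4 the same weights shrink to 202.5 GB, small enough to fit on a single 288 GB Blackwell Ultra GPU. Hopper has no native FP4 path, so its FP4 row is a capacity comparison only.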

Think of Hopper as a powerful sports car. Blackwell? It’s a hypercar with rocket boosters—faster, more efficient, and built to handle the twists of next-gen AI without breaking a sweat.

These performance gains directly fueled the surge in data center revenue reported in NVIDIA’s Q4 FY2026 earnings, which hit a record $62.3 billion (up 75% year-over-year). The Blackwell ramp-up was a huge driver, as customers raced to deploy these chips for everything from cloud AI services to enterprise agents.
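
As a quick sanity check on that growth figure, the year-ago baseline can be backed out from the two numbers quoted above. This is plain arithmetic on the article’s own figures, and the variable names are illustrative.

```python
# Back out the implied year-ago data center revenue from the figures quoted above.
current_revenue_bn = 62.3   # Q4 FY2026 data center revenue, in billions of USD
yoy_growth = 0.75           # 75% year-over-year growth

implied_prior_bn = current_revenue_bn / (1 + yoy_growth)
added_revenue_bn = current_revenue_bn - implied_prior_bn

print(f"Implied year-ago revenue: ${implied_prior_bn:.1f}B")
print(f"Revenue added in one year: ${added_revenue_bn:.1f}B")
```

That works out to roughly $35.6 billion a year earlier, meaning about $26.7 billion of quarterly data center revenue was added in twelve months.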

Widespread NVIDIA Blackwell GPU Adoption Across Industries

Adoption isn’t just happening—it’s accelerating at warp speed. In Q4 FY2026, hyperscalers still claimed slightly over 50% of data center sales, but the real story? Explosive growth from everyone else.

- Hyperscalers leading the charge: Amazon, Meta, Microsoft, Google, and others are snapping up Blackwell systems. Cloud capacity sold out fast, and massive clusters are coming online, such as Microsoft’s Azure GB300 NVL72 deployments (thousands of GPUs linked as one giant accelerator).

- Enterprises jumping in: Companies aren’t waiting. Adoption of AI agents is “skyrocketing,” per CEO Jensen Huang. Enterprises are building private clouds for RAG systems, custom models, and internal agents. Partnerships with companies like Palantir accelerate this shift.

- Sovereign and specialized users: Nations are launching their own AI infrastructure. Robotics, telecom, and even quantum-hybrid systems adopt Blackwell for edge-to-cloud scale.

- Full-stack appeal: It’s not just GPUs. NVLink fabrics, Grace CPUs in GB200 superchips, and software like TensorRT-LLM optimize everything. This ecosystem lock-in drives stickiness.

The result? Diversified demand beyond hyperscalers, making growth feel more resilient. Blackwell’s sold-out status in clouds underscores urgency—customers can’t get enough.

Challenges and the Road Ahead for NVIDIA Blackwell GPU Performance and Adoption

No revolution is without hurdles. Supply constraints linger as demand outpaces production. Geopolitical export rules limit some markets. Competition from custom ASICs exists, though NVIDIA’s CUDA dominance and software edge keep it ahead.

Looking forward, Blackwell Ultra extends leadership, and the upcoming Rubin architecture promises even more. Management has hinted that its earlier projections of hundreds of billions of dollars in Blackwell and Rubin revenue through 2026 were conservative, so reality might exceed them.

Why NVIDIA Blackwell GPU Performance and Adoption Matter Right Now

In short, NVIDIA Blackwell GPU performance and adoption aren’t side notes: they’re the engine behind the AI boom. From 3-4x training gains to 30-50x inference leaps, these GPUs make previously impossible workloads routine. That momentum powered the blockbuster $62.3 billion in data center revenue in NVIDIA’s Q4 FY2026 earnings report, a sign that enterprises and clouds are all-in on accelerated computing.

If you’re investing, building AI systems, or just geeking out on tech, Blackwell is the platform defining the decade. The agentic wave is here, and NVIDIA’s hardware is making it real. Excited yet? The best part: this is only the beginning.

FAQs

What sets NVIDIA Blackwell GPU performance apart from previous generations?

Blackwell delivers up to 4x faster training and up to 30x faster inference than Hopper, thanks to FP4 precision, massive HBM3e memory (up to 288 GB), and 8 TB/s of memory bandwidth.

How has NVIDIA Blackwell GPU adoption impacted recent earnings?

Widespread NVIDIA Blackwell GPU adoption by hyperscalers, enterprises, and sovereigns drove record data center revenue of $62.3 billion, up 75% year-over-year, in NVIDIA’s Q4 FY2026 earnings report.

Which industries are leading NVIDIA Blackwell GPU adoption?

Hyperscalers account for slightly over 50% of data center sales, but the fastest growth comes from enterprises deploying agents, AI model builders, telecom, robotics, and governments building national AI clouds.

What real-world benchmarks highlight NVIDIA Blackwell GPU performance?

MLPerf and other published results show up to 3.4x higher per-GPU inference throughput on Llama 3.1 405B versus H200 systems, up to 50x better performance per megawatt on Ultra variants, and up to 3x training speedups versus Hopper equivalents.

Is NVIDIA Blackwell GPU adoption sustainable beyond 2026?

The signs point that way. A diversifying customer base, software optimizations that extend hardware life, and the upcoming Rubin architecture all suggest continued momentum tied to exponential AI demand.